HTML

Living Standard — Last Updated 3 June 2026

The canvas element

Content model: transparent, but with no interactive content descendants except for a elements, img elements with usemap attributes, button elements, input elements whose type attribute is in the Checkbox or Radio Button state, input elements that are buttons, and select elements with a multiple attribute or a display size greater than 1.

Content attributes:

width: Horizontal dimension

height: Vertical dimension

typedef (CanvasRenderingContext2D or ImageBitmapRenderingContext or WebGLRenderingContext or WebGL2RenderingContext or GPUCanvasContext ) RenderingContext ;

[Exposed =Window ]

interface HTMLCanvasElement : HTMLElement {

[HTMLConstructor ] constructor ();

[CEReactions ] attribute unsigned long width ;

[CEReactions ] attribute unsigned long height ;

RenderingContext ? getContext (DOMString contextId , optional any options = null );

USVString toDataURL (optional DOMString type = "image/png", optional any quality );

undefined toBlob (BlobCallback _callback , optional DOMString type = "image/png", optional any quality );

OffscreenCanvas transferControlToOffscreen ();

};

callback BlobCallback = undefined (Blob? blob);

The canvas element provides scripts with a resolution-dependent bitmap canvas, which can be used for rendering graphs, game graphics, art, or other visual images on the fly.

Authors should not use the canvas element in a document when a more suitable

element is available. For example, it is inappropriate to use a canvas element to

render a page heading: if the desired presentation of the heading is graphically intense, it

should be marked up using appropriate elements (typically h1) and then styled using

CSS and supporting technologies such as shadow trees.

When authors use the canvas element, they must also provide content that, when

presented to the user, conveys essentially the same function or purpose as the

canvas's bitmap. This content may be placed as content of the canvas

element. The contents of the canvas element, if any, are the element's fallback

content.

In interactive visual media, if scripting is enabled for

the canvas element, and if support for canvas elements has been enabled,

then the canvas element represents embedded content

consisting of a dynamically created image, the element's bitmap.

In non-interactive, static, visual media, if the canvas element has been

previously associated with a rendering context (e.g. if the page was viewed in an interactive

visual medium and is now being printed, or if some script that ran during the page layout process

painted on the element), then the canvas element represents

embedded content with the element's current bitmap and size. Otherwise, the element

represents its fallback content instead.

In non-visual media, and in visual media if scripting is

disabled for the canvas element or if support for canvas elements

has been disabled, the canvas element represents its fallback

content instead.

When a canvas element represents embedded content, the

user can still focus descendants of the canvas element (in the fallback

content). When an element is focused, it is the target of keyboard interaction

events (even though the element itself is not visible). This allows authors to make an interactive

canvas keyboard-accessible: authors should have a one-to-one mapping of interactive regions to focusable areas in the fallback content. (Focus has no

effect on mouse interaction events.) [UIEVENTS]

An element whose nearest canvas element ancestor is being rendered

and represents embedded content is an element that is being used as

relevant canvas fallback content.

The canvas element has two attributes to control the size of the element's bitmap:

width and height. These attributes,

when specified, must have values that are valid

non-negative integers. The rules for parsing non-negative

integers must be used to obtain their numeric

values. If an attribute is missing, or if parsing its value returns an error, then the

default value must be used instead. The width

attribute defaults to 300, and the height attribute

defaults to 150.
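The defaulting rule above can be sketched in script. This is a simplified illustration rather than the normative parsing algorithm: the function name `canvasDimension` and the regular expression are assumptions made for this example, and the sketch ignores any range limits a user agent applies when reflecting the attribute.

```javascript
// Leading ASCII whitespace, an optional sign, then one or more digits.
// Anything after the digits is ignored, as in the integer-parsing rules.
const LEADING_INTEGER = /^[\t\n\f\r ]*([+-]?)([0-9]+)/;

// Hypothetical helper: returns the numeric value of a width or height
// attribute, or the default when the attribute is missing or invalid.
function canvasDimension(attributeValue, defaultValue) {
  if (attributeValue === null) return defaultValue; // attribute missing
  const match = LEADING_INTEGER.exec(attributeValue);
  if (match === null) return defaultValue; // parse error
  const value = Number(match[2]);
  if (match[1] === '-' && value !== 0) return defaultValue; // negative is an error
  return value;
}

const width = canvasDimension(null, 300);   // missing attribute, so 300
const height = canvasDimension('-5', 150);  // invalid value, so 150
```

In a page, the first argument would typically come from `canvas.getAttribute('width')`, which returns null when the attribute is absent.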

When setting the value of the width or height attribute, if the context mode of the canvas

element is set to placeholder, the

user agent must throw an "InvalidStateError" DOMException

and leave the attribute's value unchanged.

The natural dimensions of the canvas element when it

represents embedded content are equal to the dimensions of the

element's bitmap.

The user agent must use a square pixel density consisting of one pixel of image data per

coordinate space unit for the bitmaps of a canvas and its rendering contexts.

A canvas element can be sized arbitrarily by a style sheet; its bitmap is then subject to the 'object-fit' CSS property.

The bitmaps of canvas elements, the bitmaps of ImageBitmap objects,

as well as some of the bitmaps of rendering contexts, such as those described in the sections on

the CanvasRenderingContext2D, OffscreenCanvasRenderingContext2D, and

ImageBitmapRenderingContext objects below, have an origin-clean flag, which can be set to true or false.

Initially, when the canvas element or ImageBitmap object is created, its

bitmap's origin-clean flag must be set to

true.

A canvas element can have a rendering context bound to it. Initially, it does not

have a bound rendering context. To keep track of whether it has a rendering context or not, and

what kind of rendering context it is, a canvas also has a canvas context mode, which is initially none but can be changed to either placeholder, 2d, bitmaprenderer, webgl, webgl2, or webgpu by algorithms defined in this specification.

When its canvas context mode is none, a canvas element has no rendering context,

and its bitmap must be transparent black with a natural width equal

to the numeric value of the element's width attribute and a natural height equal to

the numeric value of the element's height attribute, those values being interpreted in CSS pixels, and being updated as the attributes are set, changed, or

removed.

When its canvas context mode is placeholder, a canvas element has no

rendering context. It serves as a placeholder for an OffscreenCanvas object, and

the content of the canvas element is updated by the OffscreenCanvas

object's rendering context.

When a canvas element represents embedded content, it provides a

paint source whose width is the element's natural width, whose height

is the element's natural height, and whose appearance is the element's bitmap.

Whenever the width and height content attributes are set, removed, changed, or

redundantly set to the value they already have, then the user agent must perform the action

from the row of the following table that corresponds to the canvas element's context mode.

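| Context mode | Action |
|---|---|
| 2d | Follow the steps to set bitmap dimensions to the numeric values of the width and height content attributes. |
| webgl or webgl2 | Follow the behavior defined in the WebGL specifications. [WEBGL] |
| webgpu | Follow the behavior defined in WebGPU. [WEBGPU] |
| bitmaprenderer | If the context's bitmap mode is set to blank, run the steps to set an ImageBitmapRenderingContext's output bitmap, passing the canvas element's rendering context. |
| placeholder | Do nothing. |
| none | Do nothing. |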


The width

and height

IDL attributes must reflect the respective content attributes of the same name, with

the same defaults.

context = canvas.getContext(contextId [, options ])

Returns an object that exposes an API for drawing on the canvas. contextId

specifies the desired API: "2d", "bitmaprenderer", "webgl", "webgl2", or "webgpu". options is handled by that API.

This specification defines the "2d" and "bitmaprenderer" contexts below. The WebGL

specifications define the "webgl" and "webgl2" contexts. WebGPU defines the "webgpu" context. [WEBGL] [WEBGPU]

Returns null if contextId is not supported, or if the canvas has already been

initialized with another context type (e.g., trying to get a "2d" context after getting a "webgl" context).

The getContext(contextId, options)

method of the canvas element, when invoked, must run these steps:

If options is not an object, then set options to null.

Set options to the result of converting options to a JavaScript value.

Run the steps in the cell of the following table whose column header matches this

canvas element's canvas context

mode and whose row header matches contextId:

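| | none | 2d | bitmaprenderer | webgl or webgl2 | webgpu | placeholder |
|---|---|---|---|---|---|---|
| "2d" | Run the 2D context creation algorithm given this element and options, set the canvas context mode to 2d, and return the new context. | Return the same object as was returned the last time the method was invoked with this same first argument. | Return null. | Return null. | Return null. | Throw an "InvalidStateError" DOMException. |
| "bitmaprenderer" | Run the ImageBitmapRenderingContext creation algorithm given this element and options, set the canvas context mode to bitmaprenderer, and return the new context. | Return null. | Return the same object as was returned the last time the method was invoked with this same first argument. | Return null. | Return null. | Throw an "InvalidStateError" DOMException. |
| "webgl" or "webgl2", if the user agent supports the WebGL feature in its current configuration | Follow the context creation instructions in the WebGL specifications [WEBGL]; if the result is null, return null; otherwise set the canvas context mode to webgl or webgl2 and return the new context. | Return null. | Return null. | Return the same object as was returned the last time the method was invoked with this same first argument. | Return null. | Throw an "InvalidStateError" DOMException. |
| "webgpu", if the user agent supports the WebGPU feature in its current configuration | Follow the context creation instructions in WebGPU [WEBGPU]; if the result is null, return null; otherwise set the canvas context mode to webgpu and return the new context. | Return null. | Return null. | Return null. | Return the same object as was returned the last time the method was invoked with this same first argument. | Throw an "InvalidStateError" DOMException. |
| An unsupported value* | Return null. | Return null. | Return null. | Return null. | Return null. | Throw an "InvalidStateError" DOMException. |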

* For example, the "webgl" or "webgl2" value in the case of a user agent having exhausted

the graphics hardware's abilities and having no software fallback implementation.
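The decision table above can be modeled as a small pure function. This is a sketch for illustration only: `getContextOutcome`, `CONTEXT_FAMILY`, and the outcome labels "create" and "same" are names invented here, and a plain Error with its name set stands in for the DOMException a user agent would throw. Like the table, the sketch groups "webgl" and "webgl2" together and does not model how those two interact.

```javascript
// Maps each contextId to the table column it belongs to.
const CONTEXT_FAMILY = {
  '2d': '2d',
  bitmaprenderer: 'bitmaprenderer',
  webgl: 'webgl or webgl2',
  webgl2: 'webgl or webgl2',
  webgpu: 'webgpu',
};

// Hypothetical model of the table: returns 'create' when a new context
// would be made, 'same' when the previous object would be returned, and
// null where the table says "Return null".
function getContextOutcome(mode, contextId, supported = Object.keys(CONTEXT_FAMILY)) {
  if (mode === 'placeholder') {
    // Every row of the placeholder column throws.
    const error = new Error('canvas is a placeholder for an OffscreenCanvas');
    error.name = 'InvalidStateError';
    throw error;
  }
  if (!supported.includes(contextId)) return null; // unsupported value
  if (mode === 'none') return 'create';
  return CONTEXT_FAMILY[mode] === CONTEXT_FAMILY[contextId] ? 'same' : null;
}

const first = getContextOutcome('none', '2d');   // 'create'
const clash = getContextOutcome('2d', 'webgl');  // null
```

In practice this is why authors check the return value of getContext() for null before drawing.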

url = canvas.toDataURL([ type [, quality ] ])

Returns a data: URL for the image in the

canvas.

The first argument, if provided, controls the type of the image to be returned (e.g. PNG or

JPEG). The default is "image/png"; that type is also used if the given type isn't

supported. The second argument applies if the type is an image format that supports variable

quality (such as "image/jpeg"), and is a number in the range 0.0 to 1.0 inclusive

indicating the desired quality level for the resulting image.

When trying to use types other than "image/png", authors can check if the image

was really returned in the requested format by checking to see if the returned string starts

with one of the exact strings "data:image/png," or "data:image/png;". If it does, the image is PNG, and thus the requested type was

not supported. (The one exception to this is if the canvas has either no height or no width, in

which case the result might simply be "data:,".)
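The prefix check described above might look like the following. The helper names `classifyDataUrl` and `fellBackToPng` are made up for this sketch; only the three string comparisons come from the text.

```javascript
// Classifies a string returned by toDataURL() using the exact prefixes
// named in the text above.
function classifyDataUrl(url) {
  if (url === 'data:,') return 'empty'; // canvas had no width or no height
  if (url.startsWith('data:image/png,') || url.startsWith('data:image/png;')) {
    return 'png';
  }
  return 'other';
}

// True when a non-PNG type was requested but PNG came back, meaning the
// requested type was not supported.
function fellBackToPng(requestedType, url) {
  return requestedType !== 'image/png' && classifyDataUrl(url) === 'png';
}
```

A caller would pass the same type string it gave to toDataURL(), for example `fellBackToPng('image/webp', canvas.toDataURL('image/webp'))`.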

canvas.toBlob(callback [, type [, quality ] ])

Creates a Blob object representing a file containing the image in the canvas,

and invokes a callback with a handle to that object.

The second argument, if provided, controls the type of the image to be returned (e.g. PNG or

JPEG). The default is "image/png"; that type is also used if the given type isn't

supported. The third argument applies if the type is an image format that supports variable

quality (such as "image/jpeg"), and is a number in the range 0.0 to 1.0 inclusive

indicating the desired quality level for the resulting image.

canvas.transferControlToOffscreen()

Returns a newly created OffscreenCanvas object that uses the

canvas element as a placeholder. Once the canvas element has become a

placeholder for an OffscreenCanvas object, its natural size can no longer be

changed, and it cannot have a rendering context. The content of the placeholder canvas is

updated on the OffscreenCanvas's relevant agent's event loop's update the rendering

steps.

The toDataURL(type, quality) method,

when invoked, must run these steps:

If this canvas element's bitmap's origin-clean flag is set to false, then throw a

"SecurityError" DOMException.

If this canvas element's bitmap has no pixels (i.e. either its horizontal

dimension or its vertical dimension is zero), then return the string "data:,". (This is the shortest data: URL; it represents the empty string in a text/plain resource.)

Let file be a

serialization of this canvas element's bitmap as a file, passing

type and quality if given.

If file is null, then return "data:,".

Return file as a data: URL.
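The control flow of these steps can be sketched as a plain function. Everything named here is an assumption for illustration: `toDataURLSteps`, the shape of the `bitmap` object, and a `serialize` callback that returns either null or an object with `type` and `base64` fields. The final line, which turns the serialized file into a data: URL, follows from the method's description rather than from a specific encoding rule stated in this section. A plain Error with its name set stands in for the DOMException.

```javascript
// Hypothetical model of the toDataURL() steps.
function toDataURLSteps(bitmap, serialize, type, quality) {
  if (!bitmap.originClean) {
    const error = new Error('bitmap is not origin-clean');
    error.name = 'SecurityError'; // a user agent throws a DOMException
    throw error;
  }
  // A bitmap with no pixels yields the shortest data: URL.
  if (bitmap.width === 0 || bitmap.height === 0) return 'data:,';
  const file = serialize(bitmap, type, quality);
  if (file === null) return 'data:,';
  return `data:${file.type};base64,${file.base64}`;
}

// Usage with a stand-in serializer that always succeeds.
const fakeSerialize = () => ({ type: 'image/png', base64: 'AAAA' });
const url = toDataURLSteps({ originClean: true, width: 2, height: 2 }, fakeSerialize);
```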

The toBlob(callback, type,

quality) method, when invoked, must run these steps:

If this canvas element's bitmap's origin-clean flag is set to false, then throw a

"SecurityError" DOMException.

Let result be null.

If this canvas element's bitmap has pixels (i.e., neither its horizontal

dimension nor its vertical dimension is zero), then set result to a copy of this

canvas element's bitmap.

Run these steps in parallel:

If result is non-null, then set result to a serialization of result as a file with type and quality if given.

Queue an element task on the

canvas blob serialization task

source given the canvas element to run these steps:

If result is non-null, then set result to a new

Blob object, created in the relevant

realm of this canvas element, representing result.

[FILEAPI]

Invoke callback with

« result » and "report".

The transferControlToOffscreen() method,

when invoked, must run these steps:

If this canvas element's context

mode is not set to none, throw an

"InvalidStateError" DOMException.

Let offscreenCanvas be a new OffscreenCanvas object with its

width and height equal to the values of the width

and height content attributes of this

canvas element.

Set the offscreenCanvas's placeholder canvas element

to a weak reference to this canvas element.

Set this canvas element's context

mode to placeholder.

Set the offscreenCanvas's inherited language to the

language of this canvas element.

Set the offscreenCanvas's inherited direction to the directionality of this canvas element.

Return offscreenCanvas.
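A minimal model of these steps, together with the earlier rule that setting width on a placeholder canvas throws, is sketched below. `CanvasModel` and its fields are hypothetical stand-ins, not the real HTMLCanvasElement; the inherited language and direction steps are omitted, and a plain Error with its name set stands in for the DOMException.

```javascript
function invalidState(message) {
  const error = new Error(message);
  error.name = 'InvalidStateError'; // a user agent throws a DOMException
  return error;
}

// Hypothetical stand-in for a canvas element's context-mode bookkeeping.
class CanvasModel {
  constructor(width = 300, height = 150) {
    this.contextMode = 'none';
    this._width = width;
    this._height = height;
  }

  get width() { return this._width; }

  set width(value) {
    // A placeholder canvas can no longer be resized from this side.
    if (this.contextMode === 'placeholder') {
      throw invalidState('cannot resize a placeholder canvas');
    }
    this._width = value;
  }

  transferControlToOffscreen() {
    if (this.contextMode !== 'none') {
      throw invalidState('canvas already has a context mode');
    }
    const offscreen = {
      width: this._width,
      height: this._height,
      placeholder: new WeakRef(this), // weak reference back to this canvas
    };
    this.contextMode = 'placeholder';
    return offscreen;
  }
}

const model = new CanvasModel(200, 100);
const offscreen = model.transferControlToOffscreen();
```

After the transfer, both a second call to `transferControlToOffscreen()` and an assignment to `model.width` throw in this model.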


typedef (HTMLImageElement or

SVGImageElement ) HTMLOrSVGImageElement ;

typedef (HTMLOrSVGImageElement or

HTMLVideoElement or

HTMLCanvasElement or

ImageBitmap or

OffscreenCanvas or

VideoFrame ) CanvasImageSource ;

enum CanvasColorType { "unorm8", "float16" };

enum CanvasFillRule { "nonzero", "evenodd" };

dictionary CanvasRenderingContext2DSettings {

boolean alpha = true ;

boolean desynchronized = false ;

PredefinedColorSpace colorSpace = "srgb";

CanvasColorType colorType = "unorm8";

boolean willReadFrequently = false ;

};

enum ImageSmoothingQuality { "low", "medium", "high" };

[Exposed =Window ]

interface CanvasRenderingContext2D {

// back-reference to the canvas

readonly attribute HTMLCanvasElement canvas ;

};

CanvasRenderingContext2D includes CanvasSettings ;

CanvasRenderingContext2D includes CanvasState ;

CanvasRenderingContext2D includes CanvasTransform ;

CanvasRenderingContext2D includes CanvasCompositing ;

CanvasRenderingContext2D includes CanvasImageSmoothing ;

CanvasRenderingContext2D includes CanvasFillStrokeStyles ;

CanvasRenderingContext2D includes CanvasShadowStyles ;

CanvasRenderingContext2D includes CanvasFilters ;

CanvasRenderingContext2D includes CanvasRect ;

CanvasRenderingContext2D includes CanvasDrawPath ;

CanvasRenderingContext2D includes CanvasUserInterface ;

CanvasRenderingContext2D includes CanvasText ;

CanvasRenderingContext2D includes CanvasDrawImage ;

CanvasRenderingContext2D includes CanvasImageData ;

CanvasRenderingContext2D includes CanvasPathDrawingStyles ;

CanvasRenderingContext2D includes CanvasTextDrawingStyles ;

CanvasRenderingContext2D includes CanvasPath ;

interface mixin CanvasSettings {

// settings

CanvasRenderingContext2DSettings getContextAttributes ();

};

interface mixin CanvasState {

// state

undefined save (); // push state on state stack

undefined restore (); // pop state stack and restore state

undefined reset (); // reset the rendering context to its default state

boolean isContextLost (); // return whether context is lost

};

interface mixin CanvasTransform {

// transformations (default transform is the identity matrix)

undefined scale (unrestricted double x , unrestricted double y );

undefined rotate (unrestricted double angle );

undefined translate (unrestricted double x , unrestricted double y );

undefined transform (unrestricted double a , unrestricted double b , unrestricted double c , unrestricted double d , unrestricted double e , unrestricted double f );

[NewObject ] DOMMatrix getTransform ();

undefined setTransform (unrestricted double a , unrestricted double b , unrestricted double c , unrestricted double d , unrestricted double e , unrestricted double f );

undefined setTransform (optional DOMMatrix2DInit transform = {});

undefined resetTransform ();

};

interface mixin CanvasCompositing {

// compositing

attribute unrestricted double globalAlpha ; // (default 1.0)

attribute DOMString globalCompositeOperation ; // (default "source-over")

};

interface mixin CanvasImageSmoothing {

// image smoothing

attribute boolean imageSmoothingEnabled ; // (default true)

attribute ImageSmoothingQuality imageSmoothingQuality ; // (default low)

};

interface mixin CanvasFillStrokeStyles {

// colors and styles (see also the CanvasPathDrawingStyles and CanvasTextDrawingStyles interfaces)

attribute (DOMString or CanvasGradient or CanvasPattern ) strokeStyle ; // (default black)

attribute (DOMString or CanvasGradient or CanvasPattern ) fillStyle ; // (default black)

CanvasGradient createLinearGradient (double x0 , double y0 , double x1 , double y1 );

CanvasGradient createRadialGradient (double x0 , double y0 , double r0 , double x1 , double y1 , double r1 );

CanvasGradient createConicGradient (double startAngle , double x , double y );

CanvasPattern ? createPattern (CanvasImageSource image , [LegacyNullToEmptyString ] DOMString repetition );

};

interface mixin CanvasShadowStyles {

// shadows

attribute unrestricted double shadowOffsetX ; // (default 0)

attribute unrestricted double shadowOffsetY ; // (default 0)

attribute unrestricted double shadowBlur ; // (default 0)

attribute DOMString shadowColor ; // (default transparent black)

};

interface mixin CanvasFilters {

// filters

attribute DOMString filter ; // (default "none")

};

interface mixin CanvasRect {

// rects

undefined clearRect (unrestricted double x , unrestricted double y , unrestricted double w , unrestricted double h );

undefined fillRect (unrestricted double x , unrestricted double y , unrestricted double w , unrestricted double h );

undefined strokeRect (unrestricted double x , unrestricted double y , unrestricted double w , unrestricted double h );

};

interface mixin CanvasDrawPath {

// path API (see also CanvasPath)

undefined beginPath ();

undefined fill (optional CanvasFillRule fillRule = "nonzero");

undefined fill (Path2D path , optional CanvasFillRule fillRule = "nonzero");

undefined stroke ();

undefined stroke (Path2D path );

undefined clip (optional CanvasFillRule fillRule = "nonzero");

undefined clip (Path2D path , optional CanvasFillRule fillRule = "nonzero");

boolean isPointInPath (unrestricted double x , unrestricted double y , optional CanvasFillRule fillRule = "nonzero");

boolean isPointInPath (Path2D path , unrestricted double x , unrestricted double y , optional CanvasFillRule fillRule = "nonzero");

boolean isPointInStroke (unrestricted double x , unrestricted double y );

boolean isPointInStroke (Path2D path , unrestricted double x , unrestricted double y );

};

interface mixin CanvasUserInterface {

undefined drawFocusIfNeeded (Element element );

undefined drawFocusIfNeeded (Path2D path , Element element );

};

interface mixin CanvasText {

// text (see also the CanvasPathDrawingStyles and CanvasTextDrawingStyles interfaces)

undefined fillText (DOMString text , unrestricted double x , unrestricted double y , optional unrestricted double maxWidth );

undefined strokeText (DOMString text , unrestricted double x , unrestricted double y , optional unrestricted double maxWidth );

TextMetrics measureText (DOMString text );

};

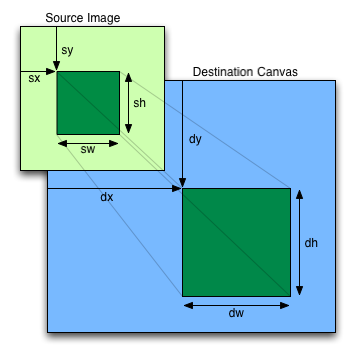

interface mixin CanvasDrawImage {

// drawing images

undefined drawImage (CanvasImageSource image , unrestricted double dx , unrestricted double dy );

undefined drawImage (CanvasImageSource image , unrestricted double dx , unrestricted double dy , unrestricted double dw , unrestricted double dh );

undefined drawImage (CanvasImageSource image , unrestricted double sx , unrestricted double sy , unrestricted double sw , unrestricted double sh , unrestricted double dx , unrestricted double dy , unrestricted double dw , unrestricted double dh );

};

interface mixin CanvasImageData {

// pixel manipulation

ImageData createImageData ([EnforceRange ] long sw , [EnforceRange ] long sh , optional ImageDataSettings settings = {});

ImageData createImageData (ImageData imageData );

ImageData getImageData ([EnforceRange ] long sx , [EnforceRange ] long sy , [EnforceRange ] long sw , [EnforceRange ] long sh , optional ImageDataSettings settings = {});

undefined putImageData (ImageData imageData , [EnforceRange ] long dx , [EnforceRange ] long dy );

undefined putImageData (ImageData imageData , [EnforceRange ] long dx , [EnforceRange ] long dy , [EnforceRange ] long dirtyX , [EnforceRange ] long dirtyY , [EnforceRange ] long dirtyWidth , [EnforceRange ] long dirtyHeight );

};

enum CanvasLineCap { "butt" , "round" , "square" };

enum CanvasLineJoin { "round" , "bevel" , "miter" };

enum CanvasTextAlign { "start", "end", "left", "right", "center" };

enum CanvasTextBaseline { "top", "hanging", "middle", "alphabetic", "ideographic", "bottom" };

enum CanvasDirection { "ltr", "rtl", "inherit" };

enum CanvasFontKerning { "auto", "normal", "none" };

enum CanvasFontStretch { "ultra-condensed", "extra-condensed", "condensed", "semi-condensed", "normal", "semi-expanded", "expanded", "extra-expanded", "ultra-expanded" };

enum CanvasFontVariantCaps { "normal", "small-caps", "all-small-caps", "petite-caps", "all-petite-caps", "unicase", "titling-caps" };

enum CanvasTextRendering { "auto", "optimizeSpeed", "optimizeLegibility", "geometricPrecision" };

interface mixin CanvasPathDrawingStyles {

// line caps/joins

attribute unrestricted double lineWidth ; // (default 1)

attribute CanvasLineCap lineCap ; // (default "butt")

attribute CanvasLineJoin lineJoin ; // (default "miter")

attribute unrestricted double miterLimit ; // (default 10)

// dashed lines

undefined setLineDash (sequence <unrestricted double > segments ); // default empty

sequence <unrestricted double > getLineDash ();

attribute unrestricted double lineDashOffset ;

};

interface mixin CanvasTextDrawingStyles {

// text

attribute DOMString lang ; // (default: "inherit")

attribute DOMString font ; // (default 10px sans-serif)

attribute CanvasTextAlign textAlign ; // (default: "start")

attribute CanvasTextBaseline textBaseline ; // (default: "alphabetic")

attribute CanvasDirection direction ; // (default: "inherit")

attribute DOMString letterSpacing ; // (default: "0px")

attribute CanvasFontKerning fontKerning ; // (default: "auto")

attribute CanvasFontStretch fontStretch ; // (default: "normal")

attribute CanvasFontVariantCaps fontVariantCaps ; // (default: "normal")

attribute CanvasTextRendering textRendering ; // (default: "auto")

attribute DOMString wordSpacing ; // (default: "0px")

};

interface mixin CanvasPath {

// shared path API methods

undefined closePath ();

undefined moveTo (unrestricted double x , unrestricted double y );

undefined lineTo (unrestricted double x , unrestricted double y );

undefined quadraticCurveTo (unrestricted double cpx , unrestricted double cpy , unrestricted double x , unrestricted double y );

undefined bezierCurveTo (unrestricted double cp1x , unrestricted double cp1y , unrestricted double cp2x , unrestricted double cp2y , unrestricted double x , unrestricted double y );

undefined arcTo (unrestricted double x1 , unrestricted double y1 , unrestricted double x2 , unrestricted double y2 , unrestricted double radius );

undefined rect (unrestricted double x , unrestricted double y , unrestricted double w , unrestricted double h );

undefined roundRect (unrestricted double x , unrestricted double y , unrestricted double w , unrestricted double h , optional (unrestricted double or DOMPointInit or sequence <(unrestricted double or DOMPointInit )>) radii = 0);

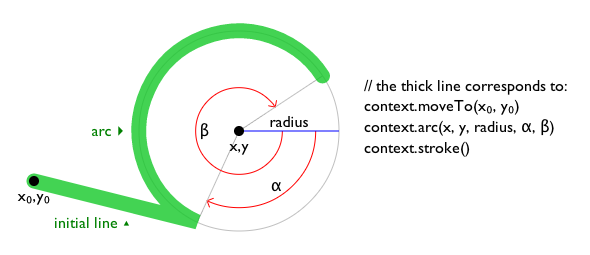

undefined arc (unrestricted double x , unrestricted double y , unrestricted double radius , unrestricted double startAngle , unrestricted double endAngle , optional boolean counterclockwise = false );

undefined ellipse (unrestricted double x , unrestricted double y , unrestricted double radiusX , unrestricted double radiusY , unrestricted double rotation , unrestricted double startAngle , unrestricted double endAngle , optional boolean counterclockwise = false );

};

[Exposed =(Window ,Worker )]

interface CanvasGradient {

// opaque object

undefined addColorStop (double offset , DOMString color );

};

[Exposed =(Window ,Worker )]

interface CanvasPattern {

// opaque object

undefined setTransform (optional DOMMatrix2DInit transform = {});

};

[Exposed =(Window ,Worker )]

interface TextMetrics {

// x-direction

readonly attribute double width ; // advance width

readonly attribute double actualBoundingBoxLeft ;

readonly attribute double actualBoundingBoxRight ;

// y-direction

readonly attribute double fontBoundingBoxAscent ;

readonly attribute double fontBoundingBoxDescent ;

readonly attribute double actualBoundingBoxAscent ;

readonly attribute double actualBoundingBoxDescent ;

readonly attribute double emHeightAscent ;

readonly attribute double emHeightDescent ;

readonly attribute double hangingBaseline ;

readonly attribute double alphabeticBaseline ;

readonly attribute double ideographicBaseline ;

};

[Exposed =(Window ,Worker )]

interface Path2D {

constructor (optional (Path2D or DOMString ) path );

undefined addPath (Path2D path , optional DOMMatrix2DInit transform = {});

};

Path2D includes CanvasPath;

To maintain compatibility with existing web content, user agents need to enumerate methods defined in CanvasUserInterface immediately after the stroke() method on CanvasRenderingContext2D objects.

context = canvas.getContext('2d' [, { [ alpha: true ] [, desynchronized: false ] [, colorSpace: 'srgb'] [, willReadFrequently: false ]} ])

Returns a CanvasRenderingContext2D object that is permanently bound to a particular canvas element.

If the alpha member is

false, then the context is forced to always be opaque.

If the desynchronized member is

true, then the context might be desynchronized.

The colorSpace member

specifies the color space of the rendering

context.

The colorType member

specifies the color type of the rendering

context.

If the willReadFrequently

member is true, then the context is marked for readback optimization.

context.canvas

Returns the canvas element.

attributes = context.getContextAttributes()

Returns an object whose:

alpha member is true if the context has an alpha component that is not 1.0; otherwise false.

desynchronized member is true if the context can be desynchronized.

colorSpace member is a string indicating the context's color space.

colorType member is a string indicating the context's color type.

willReadFrequently member is true if the context is marked for readback optimization.

The CanvasRenderingContext2D 2D rendering context represents a flat linear Cartesian surface whose origin (0,0) is at the top left corner, with the coordinate space having x values increasing when going right, and y values increasing when going down. The x-coordinate of the right-most edge is equal to the width of the rendering context's output bitmap in CSS pixels; similarly, the y-coordinate of the bottom-most edge is equal to the height of the rendering context's output bitmap in CSS pixels.

The size of the coordinate space does not necessarily represent the size of the actual bitmaps that the user agent will use internally or during rendering. On high-definition displays, for instance, the user agent may internally use bitmaps with four device pixels per unit in the coordinate space, so that the rendering remains at high quality throughout. Anti-aliasing can similarly be implemented using oversampling with bitmaps of a higher resolution than the final image on the display.

Using CSS pixels to describe the size of a rendering context's output bitmap does not mean that when rendered the canvas will cover an equivalent area in CSS pixels. CSS pixels are reused for ease of integration with CSS features, such as text layout.

In other words, the rendering context of the canvas element below has a 200x200 output bitmap (which internally uses CSS pixels as a unit for ease of integration with CSS) and is rendered as 100x100 CSS pixels:

<canvas width=200 height=200 style=width:100px;height:100px>

The 2D context creation algorithm, which is passed a target (a

canvas element) and options, consists of running these steps:

Let settings be the result of converting options to the dictionary type

CanvasRenderingContext2DSettings. (This can throw an exception.)

Let context be a new CanvasRenderingContext2D object.

Initialize context's canvas

attribute to point to target.

Set context's output bitmap to the same bitmap as target's bitmap (so that they are shared).

Set bitmap dimensions to

the numeric values of target's width and height

content attributes.

Run the canvas settings output bitmap initialization algorithm, given context and settings.

Return context.

When the user agent is to set bitmap dimensions to width and height, it must run these steps:

Resize the output bitmap to the new width and height.

Let canvas be the canvas element to which the rendering

context's canvas attribute was initialized.

If the numeric value of

canvas's width content

attribute differs from width, then set canvas's width content attribute to the shortest possible string

representing width as a valid non-negative integer.

If the numeric value of

canvas's height content

attribute differs from height, then set canvas's height content attribute to the shortest possible string

representing height as a valid non-negative integer.

Only one square appears to be drawn in the following example:

// canvas is a reference to a <canvas> element
var context = canvas.getContext('2d');
context.fillRect(0, 0, 50, 50);
canvas.setAttribute('width', '300'); // clears the canvas
context.fillRect(0, 100, 50, 50);
canvas.width = canvas.width; // clears the canvas
context.fillRect(100, 0, 50, 50); // only this square remains

The canvas attribute must return the value it was initialized to when the object was created.

The CanvasColorType enumeration is used to specify the color type of the canvas's backing store.

The "unorm8" value indicates that the type

for all color components is 8-bit unsigned normalized.

The "float16" value indicates that the type

for all color components is 16-bit floating point.

The CanvasFillRule enumeration is used to select the fill rule

algorithm by which to determine if a point is inside or outside a path.

The "nonzero" value indicates the nonzero winding

rule, wherein

a point is considered to be outside a shape if the number of times a half-infinite straight

line drawn from that point crosses the shape's path going in one direction is equal to the

number of times it crosses the path going in the other direction.

The "evenodd" value indicates the even-odd rule,

wherein

a point is considered to be outside a shape if the number of times a half-infinite straight

line drawn from that point crosses the shape's path is even.

If a point is not outside a shape, it is inside the shape.
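
The two fill rules can be compared with a small non-normative sketch. It assumes shapes are closed polygons given as arrays of [x, y] vertices and that the half-infinite line runs in the +x direction. The helper names countCrossings and isPointInside are hypothetical and are not part of any API defined here.

```javascript
// Count how often a rightward ray from (px, py) crosses the polygon edges.
// "total" counts every crossing; "winding" adds +1 or -1 by edge direction.
function countCrossings(px, py, polygons) {
  let total = 0;
  let winding = 0;
  for (const polygon of polygons) {
    for (let i = 0; i < polygon.length; i++) {
      const [x1, y1] = polygon[i];
      const [x2, y2] = polygon[(i + 1) % polygon.length];
      // Half-open comparison so a vertex lying on the ray is counted once.
      if ((y1 <= py) === (y2 <= py)) continue;
      const xAtRay = x1 + ((py - y1) / (y2 - y1)) * (x2 - x1);
      if (xAtRay <= px) continue; // the crossing is behind the ray's origin
      total += 1;
      winding += y2 > y1 ? 1 : -1;
    }
  }
  return { total, winding };
}

// A point is inside unless the rule says it is outside.
function isPointInside(px, py, polygons, fillRule = "nonzero") {
  const { total, winding } = countCrossings(px, py, polygons);
  return fillRule === "evenodd" ? total % 2 === 1 : winding !== 0;
}

// Two nested squares drawn in the same direction.
const outerSquare = [[0, 0], [10, 0], [10, 10], [0, 10]];
const innerSquare = [[3, 3], [7, 3], [7, 7], [3, 7]];
console.log(isPointInside(5, 5, [outerSquare, innerSquare], "nonzero")); // true
console.log(isPointInside(5, 5, [outerSquare, innerSquare], "evenodd")); // false
```

The centre point is crossed twice in the same direction, so the two rules disagree there; reversing the direction of the inner square makes both rules treat it as a hole.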

The ImageSmoothingQuality enumeration is used to express a preference for the

interpolation quality to use when smoothing images.

The "low" value indicates a preference

for a low level of image interpolation quality. Low-quality image interpolation may be more

computationally efficient than higher settings.

The "medium" value indicates a

preference for a medium level of image interpolation quality.

The "high" value indicates a preference

for a high level of image interpolation quality. High-quality image interpolation may be more

computationally expensive than lower settings.

Bilinear scaling is an example of a relatively fast, lower-quality image-smoothing algorithm. Bicubic or Lanczos scaling are examples of image-smoothing algorithms that produce higher-quality output. This specification does not mandate that specific interpolation algorithms be used.

This section is non-normative.

When the output bitmap is not directly displayed by the user agent, implementations can, instead of updating this bitmap, merely remember the sequence of drawing operations that have been applied to it until such time as the bitmap's actual data is needed (for example because of a call to drawImage(), or the createImageBitmap() factory method). In many cases, this will be more memory efficient.
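
As a non-normative illustration of this strategy, the following sketch records operations and only allocates and fills pixel storage when the data is requested. The DeferredBitmap class and the fillRectRed helper are hypothetical; rectangles are assumed to lie entirely within the bitmap.

```javascript
// Hypothetical model of a bitmap that defers work until its pixels are needed.
class DeferredBitmap {
  constructor(width, height) {
    this.width = width;
    this.height = height;
    this.pending = []; // recorded drawing operations, oldest first
    this.pixels = null; // allocated only when pixel data is requested
  }

  // Remember a drawing operation instead of rasterizing it immediately.
  record(operation) {
    this.pending.push(operation);
  }

  // Called when actual data is needed (for example, for a readback).
  materialize() {
    if (this.pixels === null) {
      // 4 bytes per pixel (RGBA), zero-filled, i.e. transparent black.
      this.pixels = new Uint8ClampedArray(this.width * this.height * 4);
    }
    for (const operation of this.pending) operation(this);
    this.pending.length = 0;
    return this.pixels;
  }
}

// Stand-in for a fill operation: writes opaque red into the pixel array.
function fillRectRed(x, y, w, h) {
  return (bitmap) => {
    for (let row = y; row < y + h; row++) {
      for (let col = x; col < x + w; col++) {
        const i = (row * bitmap.width + col) * 4;
        bitmap.pixels[i] = 255; // red
        bitmap.pixels[i + 3] = 255; // alpha
      }
    }
  };
}

const deferred = new DeferredBitmap(4, 4);
deferred.record(fillRectRed(1, 1, 2, 2)); // nothing is rasterized yet
const data = deferred.materialize(); // replays the recorded operations
```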

The bitmap of a canvas element is the one bitmap that's pretty much always going

to be needed in practice. The output bitmap of a rendering context, when it has one,

is always just an alias to a canvas element's bitmap.

Additional bitmaps are sometimes needed, e.g. to enable fast drawing when the canvas is being painted at a different size than its natural size, or to enable double buffering so that graphics updates, like page scrolling for example, can be processed concurrently while canvas draw commands are being executed.

A CanvasSettings object has an output bitmap that is initialized when

the object is created.

The output bitmap has an origin-clean flag, which can be set to true or false. Initially, when one of these bitmaps is created, its origin-clean flag must be set to true.

The CanvasSettings object also has an alpha boolean. When a CanvasSettings object's

alpha is false, then its alpha component must be fixed

to 1.0 (fully opaque) for all pixels, and attempts to change the alpha component of any pixel must

be silently ignored.

Thus, the bitmap of such a context starts off as opaque black instead

of transparent black; clearRect()

always results in opaque black pixels, every fourth byte from getImageData() is always 255, the putImageData() method effectively ignores every fourth

byte in its input, and so on. However, the alpha components of styles and images drawn onto the

canvas are still honoured up to the point where they would impact the output bitmap's

alpha component; for instance, drawing a 50% transparent white square on a freshly created

output bitmap with its alpha set to false

will result in a fully-opaque gray square.
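
The gray-square case can be checked with a non-normative sketch. It assumes the default source-over compositing and models pixels as [r, g, b, a] arrays with 8-bit, non-premultiplied components; the function name sourceOverOpaque is hypothetical.

```javascript
// Composite one source pixel over a destination pixel whose alpha is pinned
// to 255, as it would be when alpha is false.
function sourceOverOpaque(dst, src) {
  const a = src[3] / 255; // source alpha is still honoured for the color mix
  const mix = (s, d) => Math.round(s * a + d * (1 - a));
  return [mix(src[0], dst[0]), mix(src[1], dst[1]), mix(src[2], dst[2]), 255];
}

const opaqueBlack = [0, 0, 0, 255]; // freshly created bitmap with alpha false
const halfTransparentWhite = [255, 255, 255, 128];
console.log(sourceOverOpaque(opaqueBlack, halfTransparentWhite)); // [128, 128, 128, 255]
```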

The CanvasSettings object also has a desynchronized boolean. When a

CanvasSettings object's desynchronized is true, then the user agent may

optimize the rendering of the canvas to reduce the latency, as measured from input events to

rasterization, by desynchronizing the canvas paint cycle from the event loop, bypassing the

ordinary user agent rendering algorithm, or both. Insofar as this mode involves bypassing the

usual paint mechanisms, rasterization, or both, it might introduce visible tearing artifacts.

The user agent usually renders on a buffer which is not being displayed, quickly swapping it and the one being scanned out for presentation; the former buffer is called the back buffer and the latter the front buffer. A popular technique for reducing latency is called front buffer rendering, also known as single buffer rendering, where rendering happens in parallel and racily with the scanning out process. This technique reduces the latency at the price of potentially introducing tearing artifacts and can be used to implement, in whole or in part, the desynchronized boolean. [MULTIPLEBUFFERING]

The desynchronized boolean can be useful when implementing certain kinds of applications, such as drawing applications, where the latency between input and rasterization is critical.

The CanvasSettings object also has a will read frequently boolean. When a

CanvasSettings object's will read

frequently is true, the user agent may optimize the canvas for readback operations.

On most devices the user agent needs to decide whether to store the canvas's

output bitmap on the GPU (this is also called "hardware accelerated"), or on the CPU

(also called "software"). Most rendering operations are more performant for accelerated canvases,

with the major exception being readback with getImageData(), toDataURL(), or toBlob(). CanvasSettings objects with will read frequently equal to true tell the

user agent that the webpage is likely to perform many readback operations and that it is

advantageous to use a software canvas.

The CanvasSettings object also has a color space setting of type

PredefinedColorSpace. The CanvasSettings object's color space indicates the color space for the

output bitmap.

The CanvasSettings object also has a color type setting of type

CanvasColorType. The CanvasSettings object's color type indicates the data type of the

color and alpha components of the pixels of the output bitmap.

To initialize a CanvasSettings output

bitmap, given a CanvasSettings context and a

CanvasRenderingContext2DSettings settings:

Set context's alpha to

settings["alpha"].

Set context's desynchronized to settings["desynchronized"].

Set context's color space to

settings["colorSpace"].

Set context's color type to

settings["colorType"].

Set context's will read

frequently to settings["willReadFrequently"].

The getContextAttributes() method

steps are to return «[ "alpha" →

this's alpha, "desynchronized" →

this's desynchronized, "colorSpace" → this's

color space, "colorType" → this's

color type, "willReadFrequently" →

this's will read frequently

]».
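
The initialization and getContextAttributes() steps can be modelled with a non-normative sketch. The SettingsModel class is hypothetical, the default values are those declared for the CanvasRenderingContext2DSettings dictionary elsewhere in this standard, and the sketch performs no IDL type conversion.

```javascript
// Defaults of the CanvasRenderingContext2DSettings dictionary members.
const DEFAULT_SETTINGS = Object.freeze({
  alpha: true,
  desynchronized: false,
  colorSpace: "srgb",
  colorType: "unorm8",
  willReadFrequently: false,
});

class SettingsModel {
  // Mirrors the initialization steps: copy each member of settings,
  // using the dictionary default when a member is missing.
  constructor(settings = {}) {
    for (const key of Object.keys(DEFAULT_SETTINGS)) {
      this[key] = settings[key] !== undefined ? settings[key] : DEFAULT_SETTINGS[key];
    }
  }

  // Mirrors the getContextAttributes() method steps.
  getContextAttributes() {
    return {
      alpha: this.alpha,
      desynchronized: this.desynchronized,
      colorSpace: this.colorSpace,
      colorType: this.colorType,
      willReadFrequently: this.willReadFrequently,
    };
  }
}

const model = new SettingsModel({ alpha: false, willReadFrequently: true });
console.log(model.getContextAttributes());
```

In a page, the corresponding call would be canvas.getContext("2d", { alpha: false, willReadFrequently: true }) followed by context.getContextAttributes().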

Objects that implement the CanvasState interface maintain a stack of drawing

states. Drawing states consist of:

The current transformation matrix.

The current clipping region.

The current letter spacing, word spacing, fill style, stroke style, filter, global alpha, compositing and blending operator, and shadow color.

The current values of the following attributes: lineWidth, lineCap, lineJoin, miterLimit, lineDashOffset, shadowOffsetX, shadowOffsetY, shadowBlur, lang, font, textAlign, textBaseline, direction, fontKerning, fontStretch, fontVariantCaps, textRendering, imageSmoothingEnabled, imageSmoothingQuality.

The current dash list.

The rendering context's bitmaps are not part of the drawing state, as they

depend on whether and how the rendering context is bound to a canvas element.

Objects that implement the CanvasState mixin have a context lost boolean, that is initialized to false

when the object is created. The context lost

value is updated in the context lost steps.

context.save()

Pushes the current state onto the stack.

context.restore()

Pops the top state on the stack, restoring the context to that state.

context.reset()

Resets the rendering context, which includes the backing buffer, the drawing state stack, path, and styles.

context.isContextLost()

Returns true if the rendering context was lost. Context loss can occur due to driver crashes, running out of memory, etc. In these cases, the canvas loses its backing storage and takes steps to reset the rendering context to its default state.

The save() method

steps are to push a copy of the current drawing state onto the drawing state stack.

The restore()

method steps are to pop the top entry in the drawing state stack, and reset the drawing state it

describes. If there is no saved state, then the method must do nothing.

The reset()

method steps are to reset the rendering context to its default state.

To reset the rendering context to its default state:

Clear canvas's bitmap to transparent black.

Empty the list of subpaths in context's current default path.

Clear the context's drawing state stack.

Reset everything that drawing state consists of to their initial values.
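
The save(), restore(), and reset() steps can be modelled with a non-normative sketch. The DrawingStateModel class is hypothetical and tracks only two members of the drawing state (lineWidth and lineCap, with the initial values given later in this section); clearing the bitmap and the current default path is omitted.

```javascript
// Initial values for the two drawing-state members tracked by this model.
const INITIAL_STATE = Object.freeze({ lineWidth: 1, lineCap: "butt" });

class DrawingStateModel {
  constructor() {
    this.state = { ...INITIAL_STATE };
    this.stack = []; // the drawing state stack
  }

  // Push a copy of the current drawing state onto the stack.
  save() {
    this.stack.push({ ...this.state });
  }

  // Pop the top entry and make it current; do nothing if nothing was saved.
  restore() {
    if (this.stack.length === 0) return;
    this.state = this.stack.pop();
  }

  // Clear the stack and put every tracked member back to its initial value.
  reset() {
    this.stack.length = 0;
    this.state = { ...INITIAL_STATE };
  }
}

const drawing = new DrawingStateModel();
drawing.state.lineWidth = 5;
drawing.save();
drawing.state.lineWidth = 9;
drawing.restore();
console.log(drawing.state.lineWidth); // 5
```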

The isContextLost() method steps are to return

this's context lost.

context.lineWidth [ = value ]

styles.lineWidth [ = value ]

Returns the current line width.

Can be set, to change the line width. Values that are not finite values greater than zero are ignored.

context.lineCap [ = value ]

styles.lineCap [ = value ]

Returns the current line cap style.

Can be set, to change the line cap style.

The possible line cap styles are "butt", "round", and "square". Other values are ignored.

context.lineJoin [ = value ]

styles.lineJoin [ = value ]

Returns the current line join style.

Can be set, to change the line join style.

The possible line join styles are "bevel", "round", and "miter". Other values are ignored.

context.miterLimit [ = value ]

styles.miterLimit [ = value ]

Returns the current miter limit ratio.

Can be set, to change the miter limit ratio. Values that are not finite values greater than zero are ignored.

context.setLineDash(segments)

styles.setLineDash(segments)

Sets the current line dash pattern (as used when stroking). The argument is a list of distances for which to alternately have the line on and the line off.

segments = context.getLineDash()

segments = styles.getLineDash()

Returns a copy of the current line dash pattern. The array returned will always have an even number of entries (i.e. the pattern is normalized).

context.lineDashOffset

styles.lineDashOffset

Returns the phase offset (in the same units as the line dash pattern).

Can be set, to change the phase offset. Values that are not finite values are ignored.

Objects that implement the CanvasPathDrawingStyles interface have attributes and

methods (defined in this section) that control how lines are treated by the object.

The lineWidth attribute gives the width of lines, in

coordinate space units. On getting, it must return the current value. On setting, zero, negative,

infinite, and NaN values must be ignored, leaving the value unchanged; other values must change

the current value to the new value.

When the object implementing the CanvasPathDrawingStyles interface is created, the

lineWidth attribute must initially have the value

1.0.
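
The setter rule above can be modelled with a non-normative sketch. The LineWidthModel class is hypothetical, and Number() is used here as an approximation of the IDL conversion applied to the assigned value.

```javascript
class LineWidthModel {
  constructor() {
    this._lineWidth = 1.0; // initial value
  }

  get lineWidth() {
    return this._lineWidth;
  }

  // Zero, negative, infinite, and NaN values leave the current value unchanged.
  set lineWidth(value) {
    const n = Number(value);
    if (!Number.isFinite(n) || n <= 0) return;
    this._lineWidth = n;
  }
}

const widths = new LineWidthModel();
widths.lineWidth = 5;
widths.lineWidth = -2; // ignored
widths.lineWidth = NaN; // ignored
console.log(widths.lineWidth); // 5
```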

The lineCap attribute defines the type of endings that

UAs will place on the end of lines. The three valid values are "butt",

"round", and "square".

On getting, it must return the current value. On setting, the current value must be changed to the new value.

When the object implementing the CanvasPathDrawingStyles interface is created, the

lineCap attribute must initially have the value

"butt".

The lineJoin attribute defines the type of corners that

UAs will place where two lines meet. The three valid values are "bevel",

"round", and "miter".

On getting, it must return the current value. On setting, the current value must be changed to the new value.
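
The following non-normative sketch models the lineCap and lineJoin setters together with the "other values are ignored" behavior described in the summary above. It assumes the usual Web IDL treatment of enumeration-typed attributes, under which an assigned value outside the enumeration never reaches the setter; the LineStyleModel class is hypothetical and the initial values are those stated in this section.

```javascript
const LINE_CAPS = ["butt", "round", "square"];
const LINE_JOINS = ["bevel", "round", "miter"];

class LineStyleModel {
  constructor() {
    this._lineCap = "butt"; // initial value
    this._lineJoin = "miter"; // initial value
  }

  get lineCap() {
    return this._lineCap;
  }

  set lineCap(value) {
    const s = String(value);
    if (LINE_CAPS.includes(s)) this._lineCap = s; // matching is case-sensitive
  }

  get lineJoin() {
    return this._lineJoin;
  }

  set lineJoin(value) {
    const s = String(value);
    if (LINE_JOINS.includes(s)) this._lineJoin = s;
  }
}

const lineStyle = new LineStyleModel();
lineStyle.lineCap = "round";
lineStyle.lineJoin = "rounded"; // not a valid value, so it is ignored
console.log(lineStyle.lineCap, lineStyle.lineJoin); // round miter
```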

When the object implementing the CanvasPathDrawingStyles interface is created, the

lineJoin attribute must initially have the value

"miter".

When the lineJoin attribute has the value "miter", strokes use the miter limit ratio to decide how to render joins. The

miter limit ratio can be explicitly set using the miterLimit

attribute. On getting, it must return the current value. On setting, zero, negative, infinite, and

NaN values must be ignored, leaving the value unchanged; other values must change the current

value to the new value.

When the object implementing the CanvasPathDrawingStyles interface is created, the

miterLimit attribute must initially have the value

10.0.
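
The effect of the miter limit ratio can be illustrated with a non-normative sketch. The ratio is defined later in this section as the miter length divided by half the line width; for two lines meeting at an angle θ, elementary trigonometry gives a miter length of (lineWidth / 2) / sin(θ / 2), so the ratio depends only on the angle. The function names miterRatio and miterJoinIsDrawn are hypothetical.

```javascript
// Ratio of the miter length to half the line width for two lines that
// meet at the given angle (in radians, between 0 and π).
function miterRatio(angle) {
  return 1 / Math.sin(angle / 2);
}

// The second (miter) triangle is added only if the ratio does not exceed
// the miter limit ratio.
function miterJoinIsDrawn(angle, miterLimit = 10) {
  return miterRatio(angle) <= miterLimit;
}

const degrees = (d) => (d * Math.PI) / 180;
console.log(miterJoinIsDrawn(degrees(90))); // true  (ratio is about 1.41)
console.log(miterJoinIsDrawn(degrees(10))); // false (ratio is about 11.47)
```

With the initial value of 10.0, the miter triangle is omitted for joins sharper than roughly 11.5 degrees.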

Each CanvasPathDrawingStyles object has a dash list, which is either

empty or consists of an even number of non-negative numbers. Initially, the dash list

must be empty.

The setLineDash(segments) method, when invoked, must run

these steps:

If any value in segments is not finite (e.g. an Infinity or a NaN value), or if any value is negative (less than zero), then return (without throwing an exception; user agents could show a message on a developer console, though, as that would be helpful for debugging).

If the number of elements in segments is odd, then let segments be the concatenation of two copies of segments.

Set the object's dash list to segments.

When the getLineDash() method is invoked, it must return a

sequence whose values are the values of the object's dash list, in the same

order.
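
The setLineDash() and getLineDash() steps can be modelled with a non-normative sketch. The DashListModel class is hypothetical, and Number() stands in for the IDL conversion of each entry.

```javascript
class DashListModel {
  constructor() {
    this._dashList = []; // initially empty
  }

  setLineDash(segments) {
    const values = Array.from(segments, Number);
    // Any non-finite or negative entry causes the whole call to be ignored.
    if (values.some((v) => !Number.isFinite(v) || v < 0)) return;
    // An odd number of entries is normalized by concatenating two copies.
    this._dashList = values.length % 2 === 1 ? values.concat(values) : values;
  }

  // Returns a new sequence with the same values, in the same order.
  getLineDash() {
    return this._dashList.slice();
  }
}

const dashes = new DashListModel();
dashes.setLineDash([1, 2, 3]);
console.log(dashes.getLineDash()); // [1, 2, 3, 1, 2, 3]
dashes.setLineDash([4, -1]); // ignored
console.log(dashes.getLineDash()); // [1, 2, 3, 1, 2, 3]
```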

It is sometimes useful to change the "phase" of the dash pattern, e.g. to achieve a "marching

ants" effect. The phase can be set using the lineDashOffset attribute. On getting, it must

return the current value. On setting, infinite and NaN values must be ignored, leaving the value

unchanged; other values must change the current value to the new value.

When the object implementing the CanvasPathDrawingStyles interface is created, the

lineDashOffset attribute must initially have

the value 0.0.

When a user agent is to trace a path, given an object style

that implements the CanvasPathDrawingStyles interface, it must run the following

algorithm. This algorithm returns a new path.

Let path be a copy of the path being traced.

Prune all zero-length line segments from path.

Remove from path any subpaths containing no lines (i.e. subpaths with just one point).

Replace each point in each subpath of path other than the first point and the last point of each subpath by a join that joins the line leading to that point to the line leading out of that point, such that the subpaths all consist of two points (a starting point with a line leading out of it, and an ending point with a line leading into it), one or more lines (connecting the points and the joins), and zero or more joins (each connecting one line to another), connected together such that each subpath is a series of one or more lines with a join between each one and a point on each end.

Add a straight closing line to each closed subpath in path connecting the last point and the first point of that subpath; change the last point to a join (from the previously last line to the newly added closing line), and change the first point to a join (from the newly added closing line to the first line).

If style's dash list is empty, then jump to the step labeled convert.

Let pattern width be the sum of all the entries of style's dash list, in coordinate space units.

For each subpath subpath in path, run the following substeps. These substeps mutate the subpaths in path in place.

Let subpath width be the length of all the lines of subpath, in coordinate space units.

Let offset be the value of style's lineDashOffset, in coordinate space

units.

While offset is greater than pattern width, decrement it by pattern width.

While offset is less than zero, increment it by pattern width.

Define L to be a linear coordinate line defined along all lines in subpath, such that the start of the first line in the subpath is defined as coordinate 0, and the end of the last line in the subpath is defined as coordinate subpath width.

Let position be 0 minus offset.

Let index be 0.

Let current state be off (the other states being on and zero-on).

Dash on: Let segment length be the value of style's dash list's indexth entry.

Increment position by segment length.

If position is greater than subpath width, then end these substeps for this subpath and start them again for the next subpath; if there are no more subpaths, then jump to the step labeled convert instead.

If segment length is nonzero, then let current state be on.

Increment index by one.

Dash off: Let segment length be the value of style's dash list's indexth entry.

Let start be the offset position on L.

Increment position by segment length.

If position is less than zero, then jump to the step labeled post-cut.

If start is less than zero, then let start be zero.

If position is greater than subpath width, then let end be the offset subpath width on L. Otherwise, let end be the offset position on L.

Jump to the first appropriate step:

Do nothing, just continue to the next step.

Cut the line on which end finds itself short at end and place a point there, cutting in two the subpath that it was in; remove all line segments, joins, points, and subpaths that are between start and end; and finally place a single point at start with no lines connecting to it.

The point has a directionality for the purposes of drawing line caps (see below). The directionality is the direction that the original line had at that point (i.e. when L was defined above).

Cut the line on which start finds itself into two at start and place a point there, cutting in two the subpath that it was in, and similarly cut the line on which end finds itself short at end and place a point there, cutting in two the subpath that it was in, and then remove all line segments, joins, points, and subpaths that are between start and end.

If start and end are the same point, then this results in just the line being cut in two and two points being inserted there, with nothing being removed, unless a join also happens to be at that point, in which case the join must be removed.

Post-cut: If position is greater than subpath width, then jump to the step labeled convert.

Increment index by one. If it is equal to the number of entries in style's dash list, then let index be 0.

Return to the step labeled dash on.

Convert: This is the step that converts the path to a new path that represents its stroke.

Create a new path that describes the edge of the areas

that would be covered if a straight line of length equal to style's

lineWidth was swept along each subpath in path while being kept at an angle such that the line is orthogonal to the path

being swept, replacing each point with the end cap necessary to satisfy style's lineCap attribute as

described previously and elaborated below, and replacing each join with the join necessary to

satisfy style's lineJoin

type, as defined below.

Caps: Each point has a flat edge perpendicular to the direction of the line

coming out of it. This is then augmented according to the value of style's lineCap. The "butt" value means that no additional line cap is added. The "round" value means that a semi-circle with the diameter equal to

style's lineWidth width must

additionally be placed on to the line coming out of each point. The "square" value means that a rectangle with the length of style's lineWidth width and the

width of half style's lineWidth width, placed flat against the edge

perpendicular to the direction of the line coming out of the point, must be added at each

point.

Points with no lines coming out of them must have two caps placed back-to-back as if it was really two points connected to each other by an infinitesimally short straight line in the direction of the point's directionality (as defined above).

Joins: In addition to the point where a join occurs, two additional points are relevant to each join, one for each line: the two corners found half the line width away from the join point, one perpendicular to each line, each on the side furthest from the other line.

A triangle connecting these two opposite corners with a straight line, with the third point

of the triangle being the join point, must be added at all joins. The lineJoin attribute controls whether anything else is

rendered. The three aforementioned values have the following meanings:

The "bevel" value means that this is all that is rendered at

joins.

The "round" value means that an arc connecting the two aforementioned

corners of the join, abutting (and not overlapping) the aforementioned triangle, with the

diameter equal to the line width and the origin at the point of the join, must be added at

joins.

The "miter" value means that a second triangle must (if it can given

the miter length) be added at the join, with one line being the line between the two

aforementioned corners, abutting the first triangle, and the other two being continuations of

the outside edges of the two joining lines, as long as required to intersect without going over

the miter length.

The miter length is the distance from the point where the join occurs to the intersection of

the line edges on the outside of the join. The miter limit ratio is the maximum allowed ratio of

the miter length to half the line width. If the miter length would cause the miter limit ratio

(as set by style's miterLimit attribute) to be exceeded, then this second

triangle must not be added.

The subpaths in the newly created path must be oriented such that for any point, the number of times a half-infinite straight line drawn from that point crosses a subpath is even if and only if the number of times a half-infinite straight line drawn from that same point crosses a subpath going in one direction is equal to the number of times it crosses a subpath going in the other direction.

Return the newly created path.
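
The dash portion of the algorithm above can be approximated with a non-normative sketch that works on lengths only. It assumes a single subpath of a known total length and a dash list with an even number of entries whose sum is positive, and it skips zero-length "on" entries (the zero-on state, which the full algorithm renders as isolated points with caps). The function name dashOnIntervals is hypothetical; the result is the list of [start, end] offsets along the subpath that remain after the "off" stretches are cut away.

```javascript
function dashOnIntervals(subpathWidth, dashList, lineDashOffset = 0) {
  // An empty dash list means the whole subpath is stroked.
  if (dashList.length === 0) return [[0, subpathWidth]];

  const patternWidth = dashList.reduce((sum, entry) => sum + entry, 0);
  if (patternWidth <= 0) {
    throw new RangeError("this sketch assumes a positive pattern width");
  }

  // Bring the offset into range, as in the offset steps of the algorithm.
  let offset = lineDashOffset;
  while (offset > patternWidth) offset -= patternWidth;
  while (offset < 0) offset += patternWidth;

  const intervals = [];
  let position = -offset; // the pattern starts "offset" units before the path
  let index = 0;
  while (position < subpathWidth) {
    const segmentLength = dashList[index];
    const start = position;
    position += segmentLength;
    const isOn = index % 2 === 0; // even entries are "on", odd entries "off"
    if (isOn && segmentLength > 0 && position > 0) {
      intervals.push([Math.max(start, 0), Math.min(position, subpathWidth)]);
    }
    index = (index + 1) % dashList.length;
  }
  return intervals;
}

console.log(dashOnIntervals(20, [5, 5], 0)); // [[0, 5], [10, 15]]
console.log(dashOnIntervals(20, [5, 5], 2)); // [[0, 3], [8, 13], [18, 20]]
```

Increasing lineDashOffset shifts every interval toward the start of the subpath, which is what produces the "marching ants" effect when the offset is animated.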

context.lang [ = value ]

styles.lang [ = value ]

Returns the current language setting.

Can be set to a BCP 47 language tag, the empty string, or "inherit", to change the language used when

resolving fonts. "inherit" takes the language

from the canvas element's language, or the associated document

element when there is no canvas element.

The default is "inherit".

context.font [ = value ]

styles.font [ = value ]

Returns the current font settings.

Can be set, to change the font. The syntax is the same as for the CSS 'font' property; values that cannot be parsed as CSS font values are ignored. The default is "10px sans-serif".

Relative keywords and lengths are computed relative to the font of the canvas

element.

context.textAlign [ = value ]

styles.textAlign [ = value ]

Returns the current text alignment settings.

Can be set, to change the alignment. The possible values and their meanings are given

below. Other values are ignored. The default is "start".

context.textBaseline [ = value ]

styles.textBaseline [ = value ]

Returns the current baseline alignment settings.

Can be set, to change the baseline alignment. The possible values and their meanings are

given below. Other values are ignored. The default is "alphabetic".

context.direction [ = value ]

styles.direction [ = value ]

Returns the current directionality.

Can be set, to change the directionality. The possible values and their meanings are given

below. Other values are ignored. The default is "inherit".

context.letterSpacing [ = value ]

styles.letterSpacing [ = value ]

Returns the current spacing between characters in the text.

Can be set, to change spacing between characters. Values that cannot be parsed as a CSS

<length> are ignored. The default is "0px".

context.fontKerning [ = value ]

styles.fontKerning [ = value ]

Returns the current font kerning settings.

Can be set, to change the font kerning. The possible values and their meanings are given

below. Other values are ignored. The default is "auto".

context.fontStretch [ = value ]

styles.fontStretch [ = value ]

Returns the current font stretch settings.

Can be set, to change the font stretch. The possible values and their meanings are given

below. Other values are ignored. The default is "normal".

context.fontVariantCaps [ = value ]

styles.fontVariantCaps [ = value ]

Returns the current font variant caps settings.

Can be set, to change the font variant caps. The possible values and their meanings are given

below. Other values are ignored. The default is "normal".

context.textRendering [ = value ]

styles.textRendering [ = value ]

Returns the current text rendering settings.

Can be set, to change the text rendering. The possible values and their meanings are given

below. Other values are ignored. The default is "auto".

context.wordSpacing [ = value ]

styles.wordSpacing [ = value ]

Returns the current spacing between words in the text.

Can be set, to change spacing between words. Values that cannot be parsed as a CSS

<length> are ignored. The default is "0px".

Objects that implement the CanvasTextDrawingStyles interface have attributes

(defined in this section) that control how text is laid out (rasterized or outlined) by the

object. Such objects can also have a font style source object. For

CanvasRenderingContext2D objects, this is the canvas element given by

the value of the context's canvas attribute. For

OffscreenCanvasRenderingContext2D objects, this is the associated

OffscreenCanvas object.

Font resolution for the font style source object requires a font

source. This is determined for a given object implementing

CanvasTextDrawingStyles by the following steps: [CSSFONTLOAD]

If object's font style source object is a canvas

element, return the element's node document.

Otherwise, object's font style source object is an

OffscreenCanvas object:

Let global be object's relevant global object.

If global is a Window object, then return global's

associated Document.

Assert: global implements

WorkerGlobalScope.

Return global.

This is an example of font resolution with a regular canvas element with ID c1.

const font = new FontFace("MyCanvasFont", "url(mycanvasfont.ttf)");

document.fonts.add(font);

const context = document.getElementById("c1").getContext("2d");

document.fonts.ready.then(function () {

  context.font = "64px MyCanvasFont";

  context.fillText("hello", 0, 0);

});

In this example, the canvas will display text using mycanvasfont.ttf as its font.

This is an example of how font resolution can happen using OffscreenCanvas.

Assuming a canvas element with ID c2 which is transferred to

a worker like so:

const offscreenCanvas = document.getElementById("c2").transferControlToOffscreen();

worker.postMessage(offscreenCanvas, [offscreenCanvas]);

Then, in the worker:

self.onmessage = function (ev) {

  const transferredCanvas = ev.data;

  const context = transferredCanvas.getContext("2d");

  const font = new FontFace("MyFont", "url(myfont.ttf)");

  self.fonts.add(font);

  self.fonts.ready.then(function () {

    context.font = "64px MyFont";

    context.fillText("hello", 0, 0);

  });

};

In this example, the canvas will display text using myfont.ttf. Notice that the font is only loaded inside the worker, and not in the document context.

The font IDL attribute, on setting, must be parsed as a CSS <'font'> value (but

without supporting property-independent style sheet syntax like 'inherit'), and the resulting font

must be assigned to the context, with the 'line-height' component forced to 'normal',

with the 'font-size' component converted to CSS pixels,

and with system fonts being computed to explicit values. If the new value is syntactically

incorrect (including using property-independent style sheet syntax like 'inherit' or 'initial'),

then it must be ignored, without assigning a new font value. [CSS]

Font family names must be interpreted in the context of the font style source

object when the font is to be used; any fonts embedded using @font-face or loaded using FontFace objects that are visible to the

font style source object must therefore be available once they are loaded. (Each font style source

object has a font source, which determines what fonts are available.) If a font

is used before it is fully loaded, or if the font style source object does not have

that font in scope at the time the font is to be used, then it must be treated as if it was an

unknown font, falling back to another as described by the relevant CSS specifications.

[CSSFONTS] [CSSFONTLOAD]

On getting, the font attribute must return the serialized form of the current font of the context (with

no 'line-height' component). [CSSOM]

For example, after the following statement:

context.font = 'italic 400 12px/2 Unknown Font, sans-serif';

...the expression context.font would evaluate to the string "italic 12px "Unknown Font", sans-serif". The "400"

font-weight doesn't appear because that is the default value. The line-height doesn't appear

because it is forced to "normal", the default value.

When the object implementing the CanvasTextDrawingStyles interface is created, the

font of the context must be set to 10px sans-serif. When the 'font-size' component is

set to lengths using percentages, 'em' or 'ex' units, or the 'larger' or

'smaller' keywords, these must be interpreted relative to the computed value of the

'font-size' property of the font style source object at the time that

the attribute is set, if it is an element. When the 'font-weight' component is set to

the relative values 'bolder' and 'lighter', these must be interpreted relative to the

computed value of the 'font-weight' property of the font style

source object at the time that the attribute is set, if it is an element. If the computed values are undefined for a particular case (e.g. because

the font style source object is not an element or is not being

rendered), then the relative keywords must be interpreted relative to the normal-weight

10px sans-serif default.
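
The treatment of relative sizes can be illustrated with a non-normative sketch. The helper resolveFontSizePx is hypothetical and handles only 'px', 'em', and percentage sizes; 'ex' units and the 'larger' and 'smaller' keywords are left out because their results depend on font metrics and user agent tables. Passing undefined as the second argument models the case where no computed value is available, so the 10px default is used as the base.

```javascript
const DEFAULT_FONT_SIZE_PX = 10; // from the 10px sans-serif default

// Resolve a font-size component to CSS pixels, relative to the computed
// font size of the font style source object when one is available.
function resolveFontSizePx(size, sourceFontSizePx) {
  const base = Number.isFinite(sourceFontSizePx)
    ? sourceFontSizePx
    : DEFAULT_FONT_SIZE_PX;
  const match = /^(\d*\.?\d+)(px|em|%)$/.exec(size);
  if (match === null) return null; // outside the scope of this sketch
  const amount = parseFloat(match[1]);
  switch (match[2]) {
    case "px":
      return amount;
    case "em":
      return amount * base;
    default: // "%"
      return (amount / 100) * base;
  }
}

console.log(resolveFontSizePx("2em", 16)); // 32
console.log(resolveFontSizePx("150%", undefined)); // 15
```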

The textAlign IDL attribute, on getting, must return

the current value. On setting, the current value must be changed to the new value. When the object

implementing the CanvasTextDrawingStyles interface is created, the textAlign attribute must initially have the value start.

The textBaseline IDL attribute, on getting, must

return the current value. On setting, the current value must be changed to the new value. When the

object implementing the CanvasTextDrawingStyles interface is created, the textBaseline attribute must initially have the value

alphabetic.

Objects that implement the CanvasTextDrawingStyles interface have an associated

language value used to localize font

rendering. Valid values are a BCP 47 language tag, the empty string, or "inherit" where the language comes from the

canvas element's language, or the associated document element when there

is no canvas element. Initially, the language must be "inherit".

The direction IDL attribute, on getting, must return

the current value. On setting, the current value must be changed to the new value. When the object

implementing the CanvasTextDrawingStyles interface is created, the direction attribute must initially have the value "inherit".

Objects that implement the CanvasTextDrawingStyles interface have attributes that

control the spacing between letters and words. Such objects have associated letter spacing and word spacing values, which are CSS

<length> values. Initially, both must be the result of parsing "0px" as a CSS

<length>.

The letterSpacing getter steps are to return

the serialized form of this's letter spacing.

The letterSpacing setter steps are:

Let parsed be the result of parsing the given value as a CSS <length>.

If parsed is failure, then return.

Set this's letter spacing to parsed.

The wordSpacing getter steps are to return the

serialized form of this's word spacing.

The wordSpacing setter steps are:

Let parsed be the result of parsing the given value as a CSS <length>.

If parsed is failure, then return.

Set this's word spacing to parsed.
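
The getter and setter steps for letterSpacing and wordSpacing can be modelled as follows. This is only a sketch: `parseLength` is a deliberately simplified stand-in for CSS <length> parsing that accepts a number followed by one of a few units, and `SpacingModel` is a hypothetical class, not the real interface. What it illustrates is that a value that fails to parse leaves the stored spacing unchanged.

```javascript
// Simplified stand-in for parsing a CSS <length>. Real CSS parsing accepts
// more forms than this; anything this sketch does not recognise is
// reported as failure (null).
function parseLength(value) {
  const match = /^([+-]?\d*\.?\d+)(px|em|rem|pt|cm|mm|in)$/i.exec(String(value).trim());
  if (match === null) {
    return null;
  }
  return { number: Number(match[1]), unit: match[2].toLowerCase() };
}

// Serializes a parsed length back to a string such as "3px".
function serializeLength(length) {
  return `${length.number}${length.unit}`;
}

// Hypothetical model of the two spacing attributes.
class SpacingModel {
  constructor() {
    // Initially, both values are the result of parsing "0px".
    this._letterSpacing = parseLength("0px");
    this._wordSpacing = parseLength("0px");
  }
  get letterSpacing() {
    return serializeLength(this._letterSpacing);
  }
  set letterSpacing(value) {
    const parsed = parseLength(value);
    if (parsed === null) return; // failure: keep the current value
    this._letterSpacing = parsed;
  }
  get wordSpacing() {
    return serializeLength(this._wordSpacing);
  }
  set wordSpacing(value) {
    const parsed = parseLength(value);
    if (parsed === null) return; // failure: keep the current value
    this._wordSpacing = parsed;
  }
}
```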

The fontKerning IDL attribute, on

getting, must return the current value. On setting, the current value must be changed to the new

value. When the object implementing the CanvasTextDrawingStyles interface is created,

the fontKerning attribute must

initially have the value "auto".

The fontStretch IDL attribute, on

getting, must return the current value. On setting, the current value must be changed to the new

value. When the object implementing the CanvasTextDrawingStyles interface is created,

the fontStretch attribute must

initially have the value "normal".

The fontVariantCaps IDL attribute, on

getting, must return the current value. On setting, the current value must be changed to the new

value. When the object implementing the CanvasTextDrawingStyles interface is created,

the fontVariantCaps attribute must

initially have the value "normal".

The textRendering IDL attribute, on

getting, must return the current value. On setting, the current value must be changed to the new

value. When the object implementing the CanvasTextDrawingStyles interface is created,

the textRendering attribute must

initially have the value "auto".
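
The initial values stated above can be collected in one place, and a script may want to apply a set of styles while tolerating contexts that do not expose every attribute. The sketch below assumes that possibility; `INITIAL_TEXT_STYLES` and `applyTextStyles` are hypothetical names, and the function works on any object, so it can be exercised without a real rendering context.

```javascript
// Initial values as stated in the text above.
const INITIAL_TEXT_STYLES = Object.freeze({
  textAlign: "start",
  textBaseline: "alphabetic",
  direction: "inherit",
  letterSpacing: "0px",
  wordSpacing: "0px",
  fontKerning: "auto",
  fontStretch: "normal",
  fontVariantCaps: "normal",
  textRendering: "auto",
});

// Hypothetical helper: copies styles onto a context-like object, skipping
// any attribute the object does not expose, and returns the skipped names.
function applyTextStyles(ctx, styles) {
  const skipped = [];
  for (const [name, value] of Object.entries(styles)) {
    if (name in ctx) {
      ctx[name] = value;
    } else {
      skipped.push(name);
    }
  }
  return skipped;
}
```

In a page, `ctx` would typically be the object returned by `canvas.getContext("2d")`; the returned list lets the caller decide whether a missing attribute matters for the drawing at hand.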

The textAlign attribute's allowed keywords are

as follows:

start

Align to the start edge of the text (left side in left-to-right text, right side in right-to-left text).

end

Align to the end edge of the text (right side in left-to-right text, left side in right-to-left text).

left

Align to the left.

right

Align to the right.

center

Align to the center.

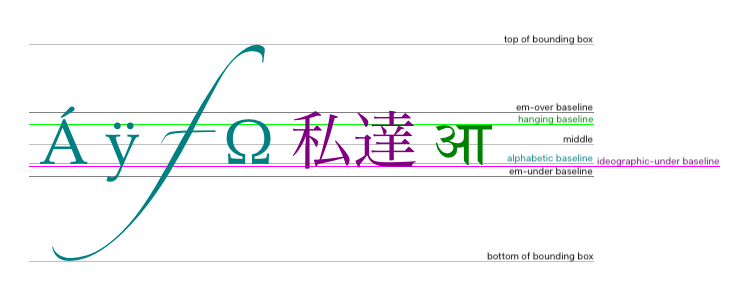

The textBaseline

attribute's allowed keywords correspond to alignment points in the

font:

The keywords map to these alignment points as follows:

top

hanging

middle

alphabetic

ideographic

bottom

The direction attribute's allowed keywords are

as follows:

ltr

Treat input to the text preparation algorithm as left-to-right text.

rtl

Treat input to the text preparation algorithm as right-to-left text.

inherit

Use the process in the text preparation algorithm to obtain the text

direction from the canvas element, placeholder canvas element, or

document element.

The fontKerning attribute's allowed keywords

are as follows:

auto

Kerning is applied at the discretion of the user agent.

normal

Kerning is applied.

none

Kerning is not applied.

The fontStretch attribute's allowed keywords

are as follows:

ultra-condensed

Same as CSS 'font-stretch' 'ultra-condensed' setting.

extra-condensed

Same as CSS 'font-stretch' 'extra-condensed' setting.

condensed

Same as CSS 'font-stretch' 'condensed' setting.

semi-condensed

Same as CSS 'font-stretch' 'semi-condensed' setting.

normal

The default setting, where width of the glyphs is at 100%.

semi-expanded

Same as CSS 'font-stretch' 'semi-expanded' setting.

expanded

Same as CSS 'font-stretch' 'expanded' setting.

extra-expanded

Same as CSS 'font-stretch' 'extra-expanded' setting.

ultra-expanded

Same as CSS 'font-stretch' 'ultra-expanded' setting.
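
For reference, the keywords can be related to glyph-width percentages. Only the 100% value for normal is stated above; the other percentages in this sketch are the ones CSS Fonts assigns to the corresponding 'font-stretch' keywords. The names `FONT_STRETCH_PERCENT` and `fontStretchToPercent` are hypothetical.

```javascript
// Keyword-to-percentage mapping for 'font-stretch', following CSS Fonts.
const FONT_STRETCH_PERCENT = Object.freeze({
  "ultra-condensed": 50,
  "extra-condensed": 62.5,
  "condensed": 75,
  "semi-condensed": 87.5,
  "normal": 100,
  "semi-expanded": 112.5,
  "expanded": 125,
  "extra-expanded": 150,
  "ultra-expanded": 200,
});

// Hypothetical helper: returns the percentage for an allowed keyword,
// or null when the keyword is not one of the allowed values.
function fontStretchToPercent(keyword) {
  return Object.prototype.hasOwnProperty.call(FONT_STRETCH_PERCENT, keyword)
    ? FONT_STRETCH_PERCENT[keyword]
    : null;
}
```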

The fontVariantCaps attribute's allowed

keywords are as follows:

normal

None of the features listed below are enabled.

small-caps

Same as CSS 'font-variant-caps' 'small-caps' setting.

all-small-caps

Same as CSS 'font-variant-caps' 'all-small-caps' setting.

petite-caps

Same as CSS 'font-variant-caps' 'petite-caps' setting.

all-petite-caps

Same as CSS 'font-variant-caps' 'all-petite-caps' setting.

unicase

Same as CSS 'font-variant-caps' 'unicase' setting.

titling-caps

Same as CSS 'font-variant-caps' 'titling-caps' setting.

The textRendering attribute's allowed

keywords are as follows:

auto

Same as 'auto' in SVG text-rendering property.

optimizeSpeed

Same as 'optimizeSpeed' in SVG text-rendering property.

optimizeLegibility

Same as 'optimizeLegibility' in SVG text-rendering property.

geometricPrecision

Same as 'geometricPrecision' in SVG text-rendering property.

The text preparation algorithm is as follows. It takes as input a string text,

a CanvasTextDrawingStyles object target, and an optional length

maxWidth. It returns an array of glyph shapes, each positioned on a common coordinate

space, a physical alignment whose value is one of left, right, and

center, and an inline box. (Most callers of this algorithm ignore the

physical alignment and the inline box.)

If maxWidth was provided but is less than or equal to zero or equal to NaN, then return an empty array.

Replace all ASCII whitespace in text with U+0020 SPACE characters.

Let font be the current font of target, as given

by that object's font attribute.

Let language be the target's language.

If language is "inherit":

Let sourceObject be target's font style source object.

If sourceObject is a canvas

element, then set language to the sourceObject's

language.

Otherwise:

Assert: sourceObject is an OffscreenCanvas object.

Set language to the sourceObject's inherited language.

If language is the empty string, then set language to explicitly unknown.

Apply the appropriate step from the following list to determine the value of direction:

If target's direction attribute has the value "ltr"

Let direction be 'ltr'.

If target's direction attribute has the value "rtl"

Let direction be 'rtl'.

If target's direction attribute has the value "inherit"

Let sourceObject be target's font style source object.

If sourceObject is a canvas

element, then let direction be sourceObject's

directionality.

Otherwise:

Assert: sourceObject is an OffscreenCanvas object.

Let direction be sourceObject's inherited direction.

Form a hypothetical infinitely-wide CSS line box containing a single inline box containing the text text, with the CSS content language set to language, and with its CSS properties set as follows:

| Property | Source |
|---|---|
| 'direction' | direction |
| 'font' | font |
| 'font-kerning' | target's fontKerning |
| 'font-stretch' | target's fontStretch |
| 'font-variant-caps' | target's fontVariantCaps |
| 'letter-spacing' | target's letter spacing |
| SVG text-rendering | target's textRendering |
| 'white-space' | 'pre' |
| 'word-spacing' | target's word spacing |

and with all other properties set to their initial values.

If maxWidth was provided and the hypothetical width of the inline box in the hypothetical line box is greater than maxWidth CSS pixels, then change font to have a more condensed font (if one is available or if a reasonably readable one can be synthesized by applying a horizontal scale factor to the font) or a smaller font, and return to the previous step.

The anchor point is a point on the inline box, and the physical

alignment is one of the values left, right, and center. These

variables are determined by the textAlign and

textBaseline values as follows:

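Horizontal position:

If textAlign is left, if textAlign is start and direction is 'ltr', or if textAlign is end and direction is 'rtl'

Let the anchor point's horizontal position be the left edge of the inline box, and let physical alignment be left.

If textAlign is right, if textAlign is end and direction is 'ltr', or if textAlign is start and direction is 'rtl'

Let the anchor point's horizontal position be the right edge of the inline box, and let physical alignment be right.

If textAlign is center

Let the anchor point's horizontal position be half way between the left and right edges of the inline box, and let physical alignment be center.

Vertical position:

If textBaseline is top

Let the anchor point's vertical position be the top of the em box of the first available font of the inline box.

If textBaseline is hanging

Let the anchor point's vertical position be the hanging baseline of the first available font of the inline box.

If textBaseline is middle

Let the anchor point's vertical position be half way between the bottom and the top of the em box of the first available font of the inline box.

If textBaseline is alphabetic

Let the anchor point's vertical position be the alphabetic baseline of the first available font of the inline box.

If textBaseline is ideographic

Let the anchor point's vertical position be the ideographic-under baseline of the first available font of the inline box.

If textBaseline is bottom

Let the anchor point's vertical position be the bottom of the em box of the first available font of the inline box.

Let result be an array constructed by iterating over each glyph in the inline box from left to right (if any), adding to the array, for each glyph, the shape of the glyph as it is in the inline box, positioned on a coordinate space using CSS pixels with its origin at the anchor point.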

Return result, physical alignment, and the inline box.
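
The parts of the algorithm above that need no font machinery can be sketched in a few functions: the maxWidth check, whitespace replacement, direction resolution, and the mapping from textAlign and direction to a physical alignment. All function names here are hypothetical, `sourceDirection` stands in for the direction supplied by the canvas element or OffscreenCanvas, and line box formation, font fitting, and glyph extraction are deliberately left out.

```javascript
// Replaces each ASCII whitespace character (tab, LF, FF, CR, space)
// with U+0020 SPACE.
function normalizeWhitespace(text) {
  return text.replace(/[\t\n\f\r ]/g, " ");
}

// Resolves the direction attribute; "inherit" defers to sourceDirection.
function resolveDirection(directionAttr, sourceDirection) {
  return directionAttr === "inherit" ? sourceDirection : directionAttr;
}

// Maps textAlign plus the resolved direction to left, right, or center.
function resolvePhysicalAlignment(textAlign, direction) {
  if (textAlign === "center") return "center";
  if (textAlign === "left" || textAlign === "right") return textAlign;
  const startIsLeft = direction === "ltr";
  if (textAlign === "start") return startIsLeft ? "left" : "right";
  return startIsLeft ? "right" : "left"; // textAlign is "end"
}

// Hypothetical driver covering only the steps modelled above.
function prepareTextSketch(text, target, sourceDirection, maxWidth) {
  if (maxWidth !== undefined && (Number.isNaN(maxWidth) || maxWidth <= 0)) {
    // The algorithm returns an empty array at this point;
    // this sketch marks that case with null.
    return null;
  }
  const direction = resolveDirection(target.direction, sourceDirection);
  return {
    text: normalizeWhitespace(text),
    direction,
    physicalAlignment: resolvePhysicalAlignment(target.textAlign, direction),
  };
}
```

For instance, a target with textAlign "end" and direction "inherit", drawn on a right-to-left canvas, resolves to the physical alignment left.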

Objects that implement the CanvasPath interface have a path. A path has a list of zero or

more subpaths. Each subpath consists of a list of one or more points, connected by straight or

curved line segments, and a flag indicating whether the subpath is closed or not. A

closed subpath is one where the last point of the subpath is connected to the first point of the

subpath by a straight line. Subpaths with only one point are ignored when painting the path.

Paths have a need new subpath flag. When this flag is set, certain APIs create a new subpath rather than extending the previous one. When a path is created, its need new subpath flag must be set.

When an object implementing the CanvasPath interface is created, its path must be initialized to zero subpaths.
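
The path model described above can be sketched as a small data structure. This is a simplified model, not the normative behavior: `PathModel` and `paintableSubpaths` are hypothetical names, the need new subpath flag is omitted, and treating `lineTo` on an empty path like `moveTo` is an assumption made to keep the sketch short. The method behaviors follow the moveTo, lineTo, and closePath summaries in this section.

```javascript
// Hypothetical model of a path as a list of subpaths.
class PathModel {
  constructor() {
    this.subpaths = []; // a newly created path has zero subpaths
  }

  // Creates a new subpath with the given point.
  moveTo(x, y) {
    this.subpaths.push({ points: [{ x, y }], closed: false });
  }

  // Adds the given point to the current subpath.
  lineTo(x, y) {
    if (this.subpaths.length === 0) {
      this.moveTo(x, y); // assumption: start a subpath when none exists
      return;
    }
    this.subpaths[this.subpaths.length - 1].points.push({ x, y });
  }

  // Marks the current subpath as closed and starts a new subpath at the
  // first point of the subpath that was just closed.
  closePath() {
    if (this.subpaths.length === 0) return;
    const last = this.subpaths[this.subpaths.length - 1];
    last.closed = true;
    const first = last.points[0];
    this.moveTo(first.x, first.y);
  }

  // Subpaths with only one point are ignored when painting.
  paintableSubpaths() {
    return this.subpaths.filter((subpath) => subpath.points.length > 1);
  }
}
```

Drawing a triangle with `moveTo`, two `lineTo` calls, and `closePath` leaves two subpaths in this model: the closed triangle, and a one-point subpath at the triangle's start that painting ignores.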

context.moveTo(x, y)

path.moveTo(x, y)

Creates a new subpath with the given point.

context.closePath()

path.closePath()

Marks the current subpath as closed, and starts a new subpath with a point the same as the start and end of the newly closed subpath.

context.lineTo(x, y)

path.lineTo(x, y)

Adds the given point to the current subpath, connected to the previous one by a straight line.

context.quadraticCurveTo(cpx, cpy, x, y)CanvasRenderingContext2D/quadraticCurveTo

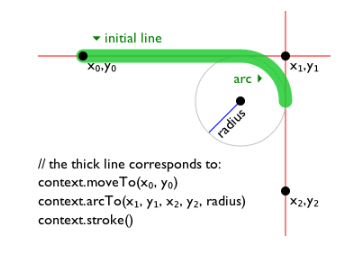

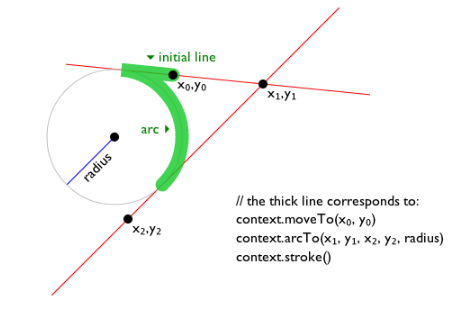

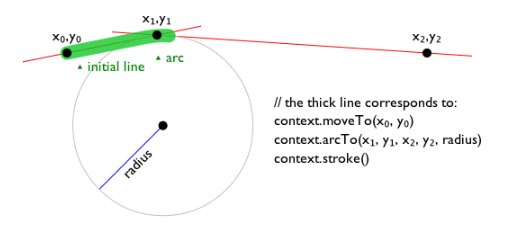

Support in all current engines.